Smart Image Acquisition

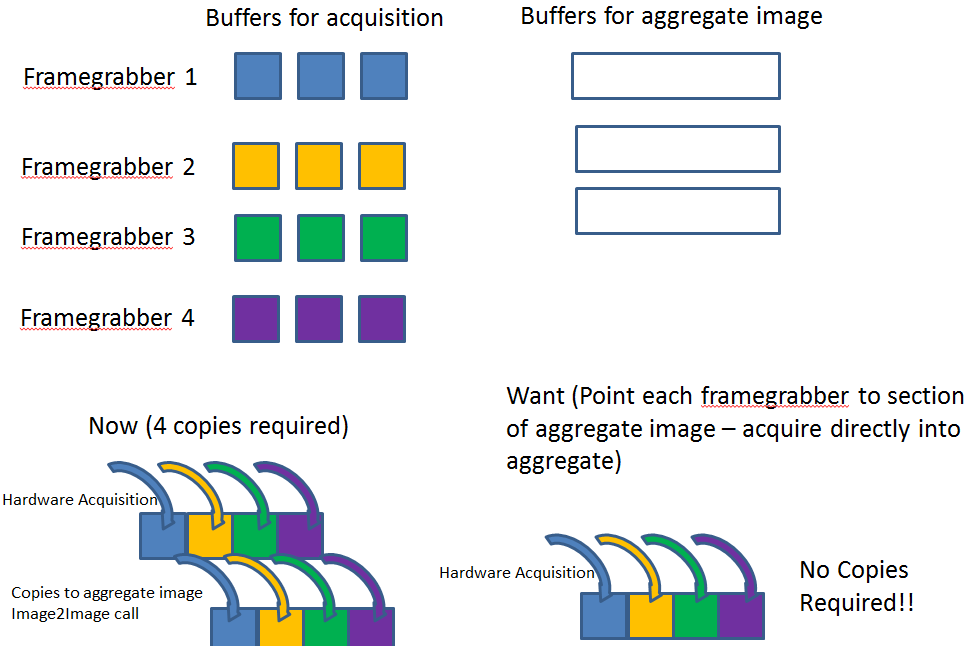

Right now, if you want to acquire from multiple cameras and then stitch the images together into an aggregate image for processing, you must allocate individual buffers for each frame grabber plus buffers for the aggregate. In addition, you must perform N image copies, where N is the number of frame grabbers in your system. This is just dead overhead.

What I propose is to change the driver so that each frame grabber can point to a portion of a larger aggregate image. Then, as each image comes in, the aggregate image is populated directly. This saves N memory copies and N x M image buffers (where M is the number of buffers in a ring acquisition). This would be a tremendous performance improvement. The figure shows the idea.
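To make the difference concrete, here is a minimal sketch of both approaches in plain Python. It is not the IMAQ driver API: `fake_acquire`, the frame sizes, and the buffer names are all hypothetical stand-ins, and the frames are assumed to be stacked vertically so that each grabber's region is one contiguous block of the aggregate.

```python
# Hypothetical sketch: none of these names come from the NI-IMAQ driver.
WIDTH, HEIGHT, N = 4, 2, 3       # tiny 8-bit mono frames, three simulated grabbers
FRAME_BYTES = WIDTH * HEIGHT

def fake_acquire(dest, camera_id):
    """Stand-in for a driver transfer: fill dest with the camera's id value."""
    dest[:] = bytes([camera_id]) * len(dest)

# Current approach: one buffer per grabber, then N copies into the aggregate.
aggregate_copy = bytearray(FRAME_BYTES * N)
copies = 0
for cam in range(N):
    frame = bytearray(FRAME_BYTES)                 # per-grabber buffer
    fake_acquire(memoryview(frame), cam + 1)
    start = cam * FRAME_BYTES
    aggregate_copy[start:start + FRAME_BYTES] = frame   # the extra copy
    copies += 1

# Proposed approach: each grabber writes into its own region of the aggregate.
aggregate_direct = bytearray(FRAME_BYTES * N)
whole = memoryview(aggregate_direct)
regions = [whole[cam * FRAME_BYTES:(cam + 1) * FRAME_BYTES] for cam in range(N)]
for cam, region in enumerate(regions):
    fake_acquire(region, cam + 1)                  # no per-grabber buffer, no copy

same_result = aggregate_direct == aggregate_copy
```

Both paths end with the same aggregate image, but the second one never allocates the per-grabber buffers and never performs the N copies. Side-by-side tiling would need each region to be addressed with a row stride rather than as one contiguous slice, which is presumably where the driver support would come in.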

- Tags:

- imaq
